🧑‍🦯 Be My Eyes introduces AI app to help the visually impaired

Be My Eyes' new AI Virtual Volunteer brings instant visual help, making daily tasks easier and boosting independence for visually impaired users.

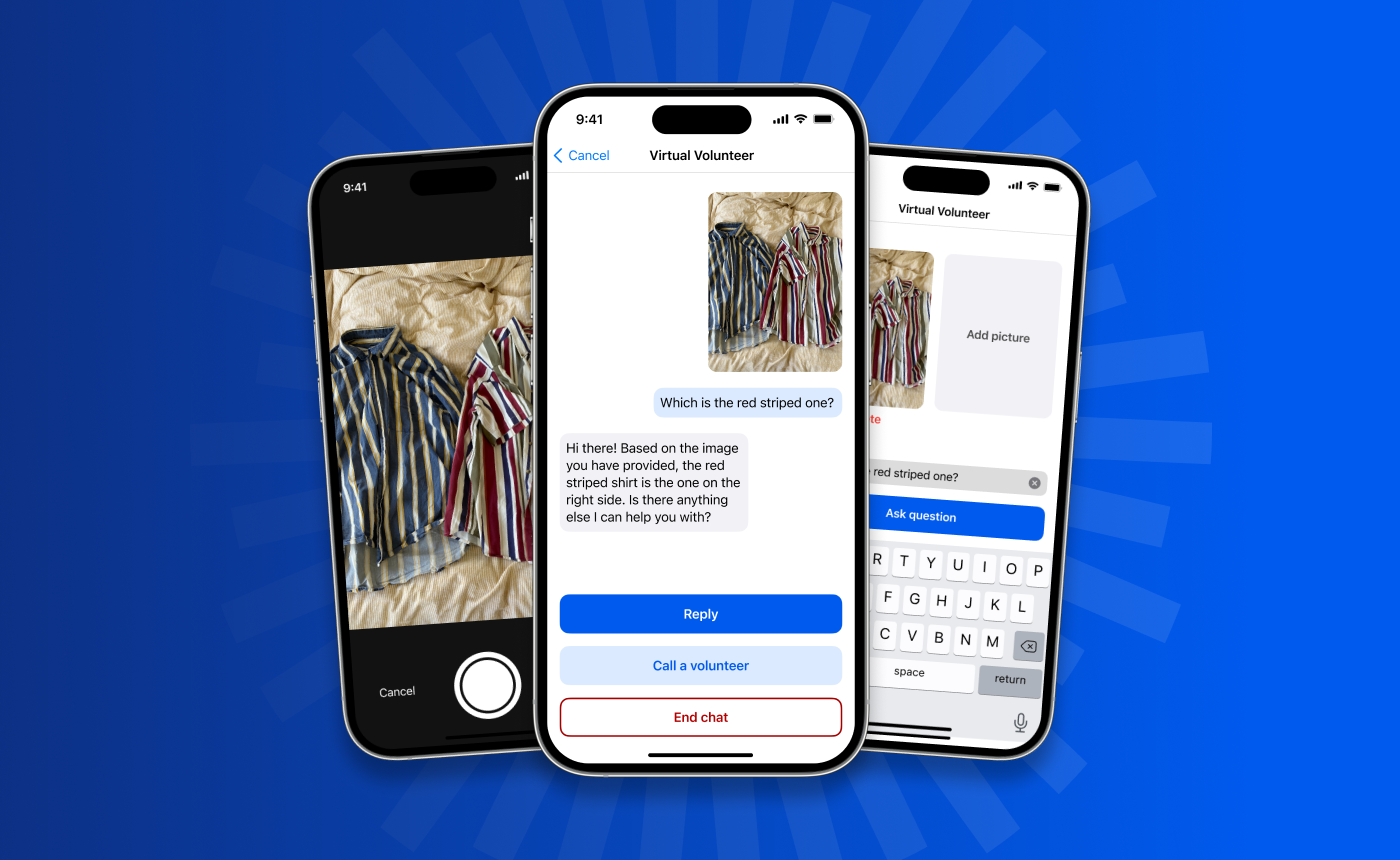

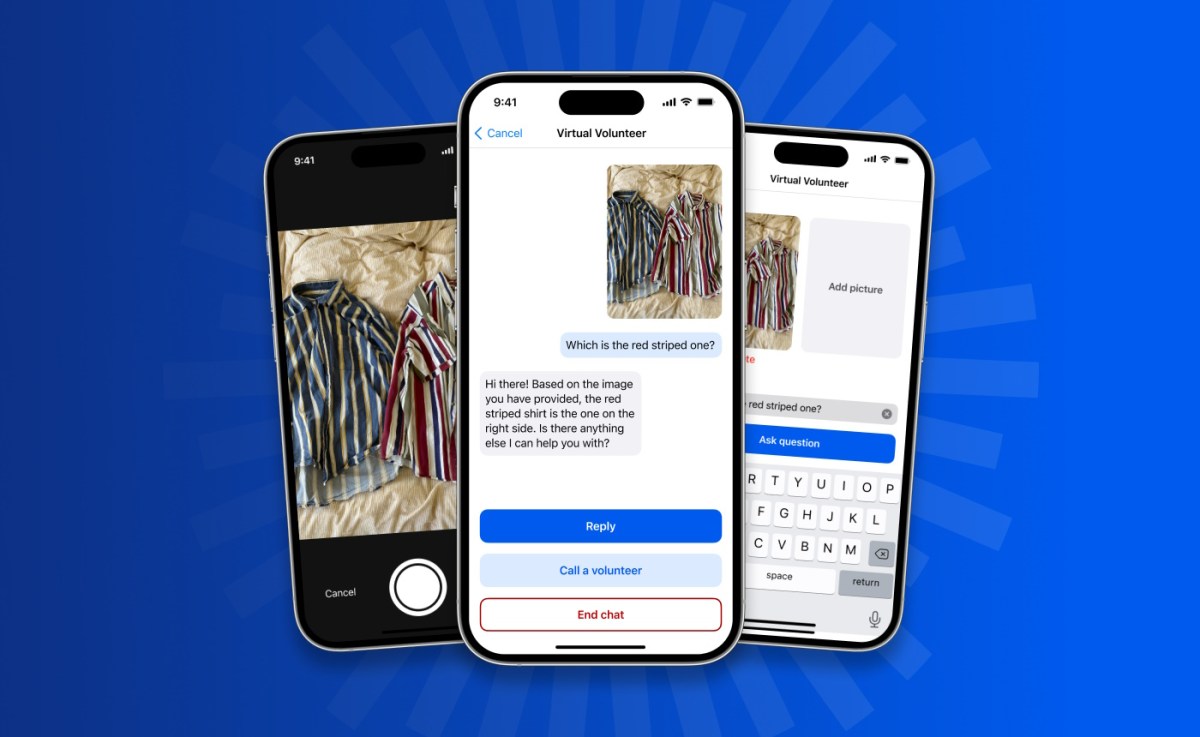

Be My Eyes, an app that connects blind and low vision users with sighted volunteers to assist with everyday tasks, is introducing a "virtual volunteer" feature powered by artificial intelligence (AI).

Integrating GPT-4's multimodal capability

The new version of the app is the first to integrate GPT-4's multimodal capability, allowing it to not only chat intelligibly but also inspect and understand images.

Users can send images via the app to an AI-powered Virtual Volunteer, which will answer any question about the image and provide instantaneous visual assistance for various tasks.

Context-aware AI assistance

What sets the Virtual Volunteer tool apart from other image-to-text technology available today is context: it brings a deeper level of understanding and conversational ability not yet seen in the digital assistant field.

For example, the tool can suggest recipes based on the contents of a user's refrigerator and provide step-by-step instructions for making them.

Human volunteers remain essential

If the tool is unable to answer a question, it will automatically offer users the option to be connected via the app to a sighted volunteer for assistance. Human volunteers will continue to play an essential role in the Be My Eyes app.

Expanding accessibility for businesses

The new feature also aims to offer a way for businesses to better serve their customers by prioritizing accessibility. Be My Eyes plans to begin beta testing the feature with corporate customers in the coming weeks, with broader availability later this year.

Closed beta testing and future development

The feature is currently in a closed beta, where a small subset of users is testing it and providing feedback. The team at Be My Eyes is working closely with its community and OpenAI to define and guide the tool's capabilities as its development continues.
