💡 The method that makes AI learn more easily

Nvidia researchers have found a way to teach an AI to recognize images that requires only a fraction of the training images normally needed.


To teach an AI to recognize images, you normally need 50,000–100,000 images for it to train on, for example to learn to recognize a cat. Collecting that many pictures is time-consuming at best and impossible at worst. But now researchers at Nvidia have come up with a way to reduce the number of images needed.

Using a technique called Adaptive Discriminator Augmentation, or Ada, the researchers were able to train an AI with only 1,500 images. They estimate that, with Ada, training will typically require 10–20 times fewer images.

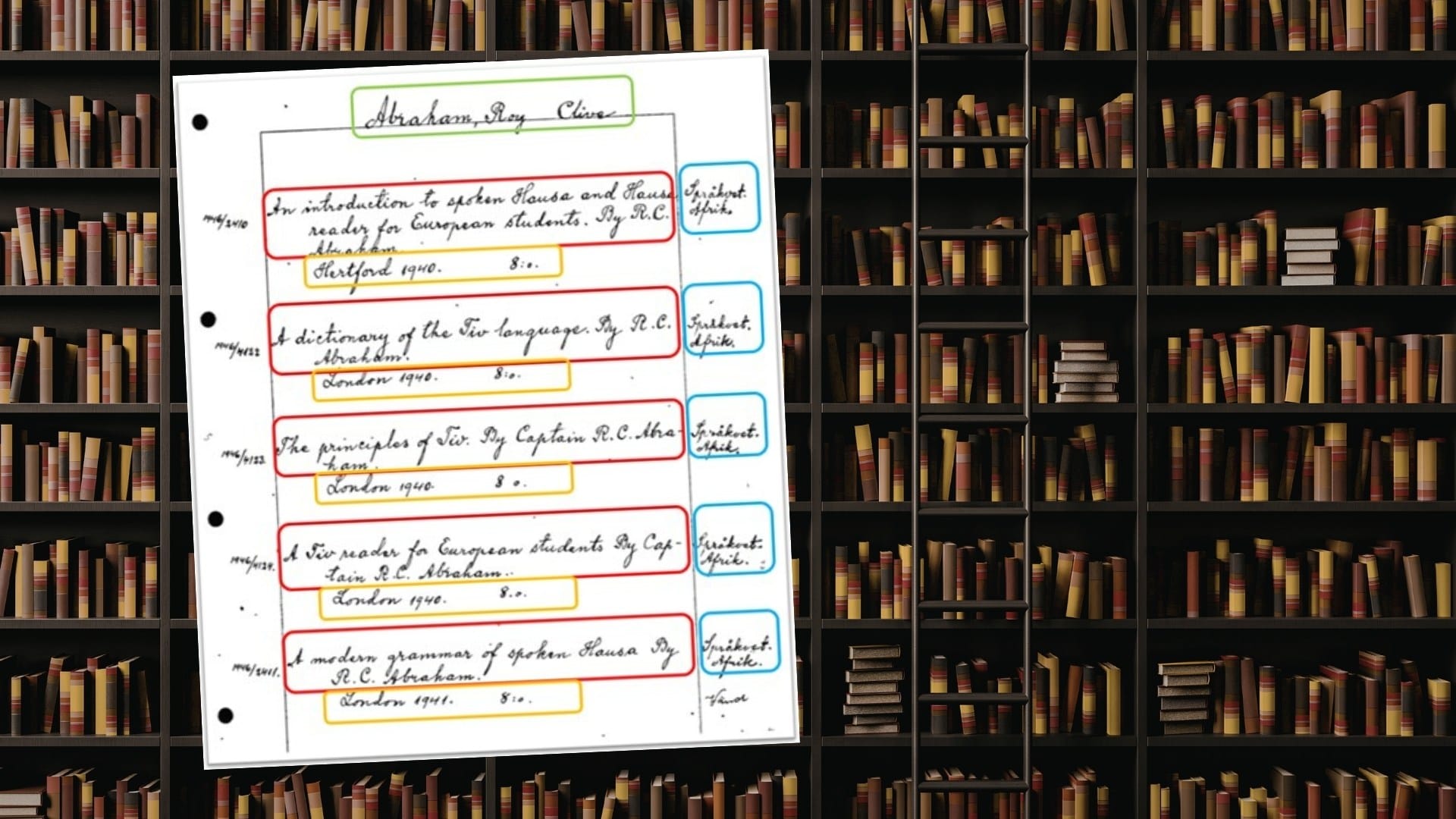

The new method makes it possible to train an AI to recognize images in cases where there was previously not enough data. The researchers started by letting the AI go through images of works by famous artists so that it could then create works of art in their style. But the method can be used for much more.

Among other things, the researchers expect that the method will give AI the opportunity to recognize medical images of unusual diseases. In this way, AI can help doctors diagnose diseases they may never have seen before.

Simply put, Ada creates its own images to give the AI a larger selection to train on. Ada takes the pictures that are available and distorts them in different ways, for example by changing colors, rotating the image, or removing parts of it.
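To make the idea of distorting pictures concrete, here is a minimal sketch in plain Python. It is not Nvidia's code: the function names (`shift_colors`, `rotate_90`, `cutout`, `distort`), the tiny list-of-pixels image format, and the 50% chance for each distortion are all assumptions made for illustration.

```python
import random

# An "image" here is a list of rows; each pixel is an (r, g, b) tuple, 0-255.

def shift_colors(img, delta):
    """Brighten or darken every channel by delta, clamped to 0-255."""
    return [[tuple(min(255, max(0, c + delta)) for c in px) for px in row]
            for row in img]

def rotate_90(img):
    """Rotate the image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]

def cutout(img, top, left, size):
    """Return a copy with a size x size square blacked out at (top, left)."""
    out = [list(row) for row in img]
    for y in range(top, min(top + size, len(out))):
        for x in range(left, min(left + size, len(out[y]))):
            out[y][x] = (0, 0, 0)
    return out

def distort(img, rng):
    """Apply each distortion with a 50% chance (an arbitrary choice)."""
    if rng.random() < 0.5:
        img = shift_colors(img, rng.randint(-40, 40))
    if rng.random() < 0.5:
        img = rotate_90(img)
    if rng.random() < 0.5:
        img = cutout(img, rng.randrange(len(img)), rng.randrange(len(img[0])), 1)
    return img

# Turn one 2x2 picture into several distorted training variants.
original = [[(10, 20, 30), (40, 50, 60)],
            [(70, 80, 90), (250, 251, 252)]]
rng = random.Random(0)
variants = [distort(original, rng) for _ in range(5)]
```

Each call to `distort` returns a new picture and leaves `original` untouched, so a single photo can be reused many times with different distortions.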

These images are then run through a generative adversarial network, Gan, a well-established AI method in which one network generates images while another learns to tell them apart from real ones. With Ada, the Gan gets enough pictures to train the AI. Ada also makes sure that the AI does not mistake the distortions for features of the images it should learn.
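The article does not spell out how Ada keeps the distortions from leaking into what the AI learns. One plausible reading of the word "adaptive", sketched here purely as an assumption, is that each image is distorted only with some probability, and that probability is raised when the network shows signs of memorizing its training images and lowered otherwise. The names `update_probability`, `maybe_distort`, `overfit_signal`, `target`, and `step`, and their default values, are hypothetical and not taken from Nvidia's code.

```python
import random

def update_probability(p, overfit_signal, target=0.6, step=0.01):
    """Nudge the distortion probability p up or down.

    overfit_signal is a hypothetical score where higher values mean the
    network looks more like it is memorizing its training images.
    """
    if overfit_signal > target:
        p += step   # memorizing: distort more often
    else:
        p -= step   # not memorizing: distort less often
    return min(max(p, 0.0), 1.0)  # keep p a valid probability

def maybe_distort(image, p, distort, rng):
    """Distort the image with probability p, otherwise pass it through."""
    return distort(image) if rng.random() < p else image

# Example: p drifts upward while the (made-up) signal stays above target.
p = 0.0
for signal in [0.9, 0.8, 0.7, 0.5]:
    p = update_probability(p, signal)
```

Because some images always pass through undistorted whenever `p` is below 1, the network still sees what clean pictures look like, which is one intuition for why the distortions need not show up in the final result.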
